Between Silicon and Soul: How AI Is Remaking Human Life in 2025

AI is neither the utopia nor the apocalypse. The most comprehensive data reveals a technology that is simultaneously augmenting and eroding, democratizing and concentrating, healing and harming — often in the same domain, sometimes in the same interaction.

I. The job market: slower than feared, stranger than expected

The displacement headline is misleading — but the reality isn't comforting

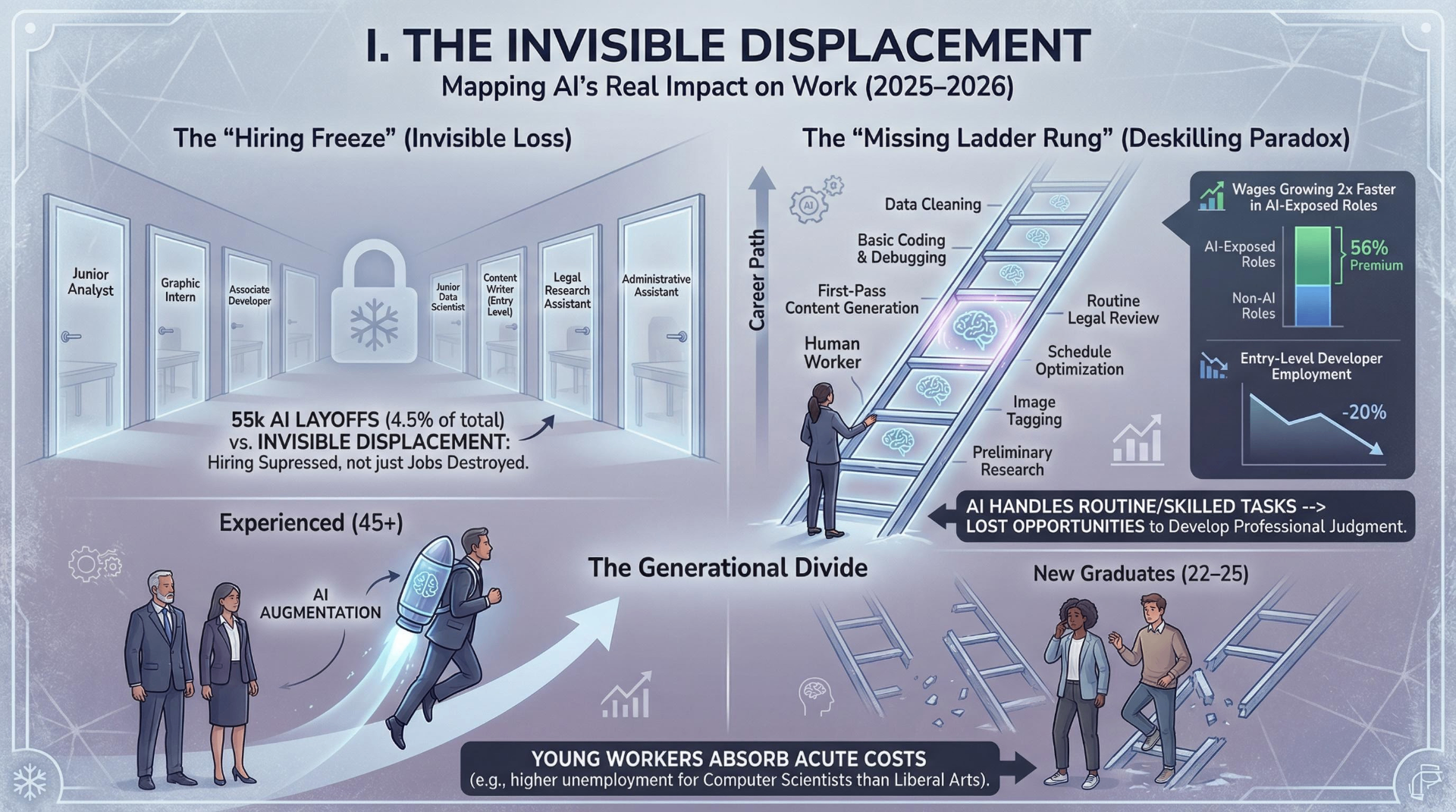

The most widely cited figure — roughly 55,000 U.S. job cuts directly attributed to AI in 2025, per outplacement firm Challenger, Gray & Christmas — represents just 4.5% of the 1.17 million total layoffs that year. Major companies made AI-linked cuts: Amazon (14,000 corporate roles), Microsoft (~15,000), Salesforce (4,000 customer support), Block/Square (4,000 — nearly 40% of its workforce). Jack Dorsey stated bluntly that "intelligence tools have changed what it means to build and run a company."

But here's the critical finding that undermines the headline: many of these layoffs are "AI-washed." Harvard Business Review reported in January 2026 that a survey of over 1,000 global executives found layoffs were occurring "almost completely in anticipation of AI's impact" — not because AI was actually performing the work. When New York State began requiring employers to cite "technological innovation or automation" in mandatory layoff notices, none of the 160 companies filing — including Amazon and Goldman Sachs — checked that box. Amazon CEO Andy Jassy later clarified the company's cuts were "not really AI-driven, not right now at least." Deutsche Bank analysts predict "AI redundancy washing will be a significant feature of 2026."

The major institutional projections remain net-positive. The World Economic Forum's Future of Jobs Report 2025, surveying employers representing 14 million workers across 55 economies, projects 92 million jobs displaced and 170 million created by 2030 — a net gain of 78 million. Goldman Sachs research estimates full AI adoption could displace 6–7% of U.S. employment, but models the effect as "transitory," with unemployment rising roughly 0.5 percentage points before fading within two years.

Yet for specific populations, the pain is real and measurable now. Stanford's Digital Economy Lab documented a 20% decline in employment for software developers aged 22–25 since late 2022. Goldman Sachs found that U.S. tech workers in their twenties in AI-exposed occupations saw unemployment rise by nearly 3 percentage points in the first half of 2025. The Federal Reserve Bank of New York reported a striking reversal: unemployment rates for liberal arts degree holders are now about half those of computer scientists and engineers.

What the research actually shows about tasks versus jobs

The most empirically grounded picture comes from Anthropic's Economic Index, a January 2026 report analyzing millions of Claude conversations mapped to 20,000+ occupational tasks. It found that 52% of AI use was augmentation (human-in-the-loop) and 45% automation — with a gradual trend toward greater automation over time. Computer and mathematical occupations dominated at 37.2% of all AI queries, followed by arts, design, and media at 10.3%.

The distinction between "exposure" and "displacement" is crucial. The Yale Budget Lab found no clear upward trend in AI-task exposure among the unemployed since ChatGPT's debut. Their key finding: AI appears to be suppressing hiring more than destroying existing jobs — an "invisible displacement" that may be as damaging as layoffs but far harder to measure. A generation of workers may be unable to build career capital not because they lost jobs, but because jobs that would have existed simply never materialized.

A Harvard Business School working paper provided the first empirical examination of displacement versus augmentation effects using the near-universe of U.S. job postings. It found that in automation-prone occupations, AI simplifies workflows and reduces the range of required skills (deskilling), while in augmentation-prone occupations, AI introduces tools requiring complementary capabilities (upskilling). Both effects are happening simultaneously across the economy.

The sectors tell different stories

Creative industries are experiencing the sharpest measurable contraction. An analysis of 180 million global job postings found computer graphic artist positions down 33% in 2025. Freelance writing projects on Upwork declined 32% year-over-year — the largest drop of any category. The advertising and PR sector shed approximately 54,000 positions, a 9.9% decline in twelve months. A Ramp study found that more than half of businesses spending on freelance platforms in 2022 had stopped entirely by 2025.

Customer service faces the highest estimated displacement risk, approximately 80%, with Salesforce's 4,000-person cut serving as the highest-profile case. Financial services firms are making cuts while shifting work to automated systems. Manufacturing and logistics continue a longer automation trend: UPS eliminated tens of thousands of jobs through automated hubs, while C.H. Robinson cut 1,400 after deploying AI-driven pricing and scheduling tools.

Healthcare remains relatively insulated due to physical labor and human interaction requirements. Only about 18.7% of hospitals had adopted AI tools by 2022. But a concerning finding published in The Lancet Gastroenterology & Hepatology showed endoscopists who routinely used AI assistance performed worse when access was removed — detection rates dropped from 28.4% to 22.4% — a harbinger of the deskilling problem across sectors.

Workers are anxious, and the data validates that anxiety — partially

Pew Research found in February 2025 that 52% of workers feel worried about AI in the workplace; only 36% feel hopeful. Mercer's Global Talent Trends survey showed employee concerns about job loss due to AI skyrocketed from 28% in 2024 to 40% in 2026. The Edelman Trust Barometer revealed only 47% of Americans trust AI, compared to 77% of Chinese and 75% of Indian respondents — a trust gap that compounds an adoption gap.

The wage picture offers a genuine bright spot, with important caveats. PwC's analysis of roughly one billion job advertisements found wages growing twice as fast in AI-exposed industries, with workers possessing AI skills commanding a 56% wage premium. A Stanford/Barcelona Economics working paper found AI "substantially reduces wage inequality while raising average wages by 21%." MIT's Erik Brynjolfsson documented that lowest-skilled workers derive the greatest productivity gains from AI in within-occupation studies.

But Brookings warns that these within-occupation findings "can be misleading when attempting to predict economy-wide impacts." The IMF notes that high-income workers' tasks are highly complementary with AI and they're better positioned to benefit from capital returns. The long-term risk: 50–70% of increased wage inequality over the past 40 years is attributed to new automation technologies, and AI may continue this pattern even as it equalizes within specific job categories.

The deskilling paradox may be the most important finding

Anthropic's data reveals that Claude tends to handle the higher-education components of most jobs, producing a net deskilling effect — the tasks AI takes over are often the most skilled parts of the role. Microsoft Research and Carnegie Mellon found that knowledge workers reported AI made tasks cognitively easier, but they were ceding problem-solving expertise to the system. The American Enterprise Institute frames this as the "missing ladder rung" problem: when AI handles routine tasks that once served as training grounds, newcomers lose opportunities to develop professional judgment.

This creates a paradox: short-term productivity gains may come at the cost of long-term workforce capability. An Illinois Law School study found students using AI chatbots were more prone to critical errors, raising concerns about widespread deskilling among younger attorneys. The University of Copenhagen found that for most businesses, the actual productivity gain was a modest 3% time savings — far less than the headlines suggest.

Policy is fragmented and lagging

The EU AI Act, the most comprehensive regulatory framework, phases in through 2027, with rules for high-risk AI in employment (bias testing, human oversight, documentation) not fully applicable until August 2026. The United States has no comprehensive federal AI regulation; the Trump administration revoked Biden-era AI guidance in January 2025 and is actively trying to preempt state laws. States and cities are filling the vacuum, and Colorado's AI Act, California's AI Safety Act, and New York City's Local Law 144 together form a patchwork.

On retraining, Brookings reports that historical evidence shows displaced workers often end up in lower-paid service jobs. A critical bottleneck: 77% of new AI-related jobs require master's degrees, while most displaced workers are mid-career without advanced credentials. Meanwhile, 95% of firms report no measurable impact on profits from AI investments, suggesting the current wave of AI-justified restructuring may be running ahead of actual capability deployment.

II. Education: the design variable that changes everything

Students have already decided — institutions haven't

AI use among students is now effectively universal. A UK survey found 92% of undergraduates use AI for academic work; the College Board reports 84% of U.S. high school students use generative AI for schoolwork. Among college students, 89% of AI users apply it to homework, 53% to essays. What's less clear is whether this constitutes "cheating" or a fundamental shift in how learning happens.

Stanford's research on high school cheating rates provides essential context: overall cheating rates have remained flat at 60–70% since before ChatGPT. The tool changed how students cheat, not whether they do. Meanwhile, UK universities saw AI-related academic misconduct rise from 1.6 to 7.5 per 1,000 students between 2022 and 2025, with nearly 7,000 students formally caught — triple the prior year. But a University of Reading experiment found that 94% of AI-written exam submissions went completely undetected by human markers, suggesting official numbers massively undercount actual use.

The detection arms race is widely considered a dead end. AI detectors show significant bias against non-native English speakers, and if each student has one submission screened, a 1–2% false positive rate translates to 200–400 wrongful accusations per semester at a 20,000-student university. Students increasingly use "humanizer" tools to evade detection. Half of teachers report increased distrust of students, an erosion of the educational relationship that may be as damaging as the cheating itself.
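The false-positive arithmetic is worth making explicit, because the number of wrongful flags scales with how many honest submissions are screened, not just with enrollment. A minimal sketch, assuming every screened submission is human-written and each is flagged independently at the detector's false positive rate; the function name `expected_false_accusations` is hypothetical, chosen only for illustration.

```python
def expected_false_accusations(students: int, submissions_per_student: int,
                               false_positive_rate: float) -> float:
    """Expected number of honest submissions wrongly flagged as AI-written.

    Assumption (illustrative): all screened work is human-written and each
    submission is flagged independently at the given false positive rate.
    """
    return students * submissions_per_student * false_positive_rate


# One screened submission per student at a 20,000-student university,
# at the two ends of the 1-2% false positive range quoted above.
for rate in (0.01, 0.02):
    flags = expected_false_accusations(20_000, 1, rate)
    print(f"{rate:.0%} false positive rate: {flags:.0f} expected false flags")
```

Under the same assumptions, screening four assignments per student at a 1% rate would yield 800 expected false flags, so per-semester totals could run well above the quoted range at institutions that screen every assignment.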

The learning outcome data reveals a stark paradox

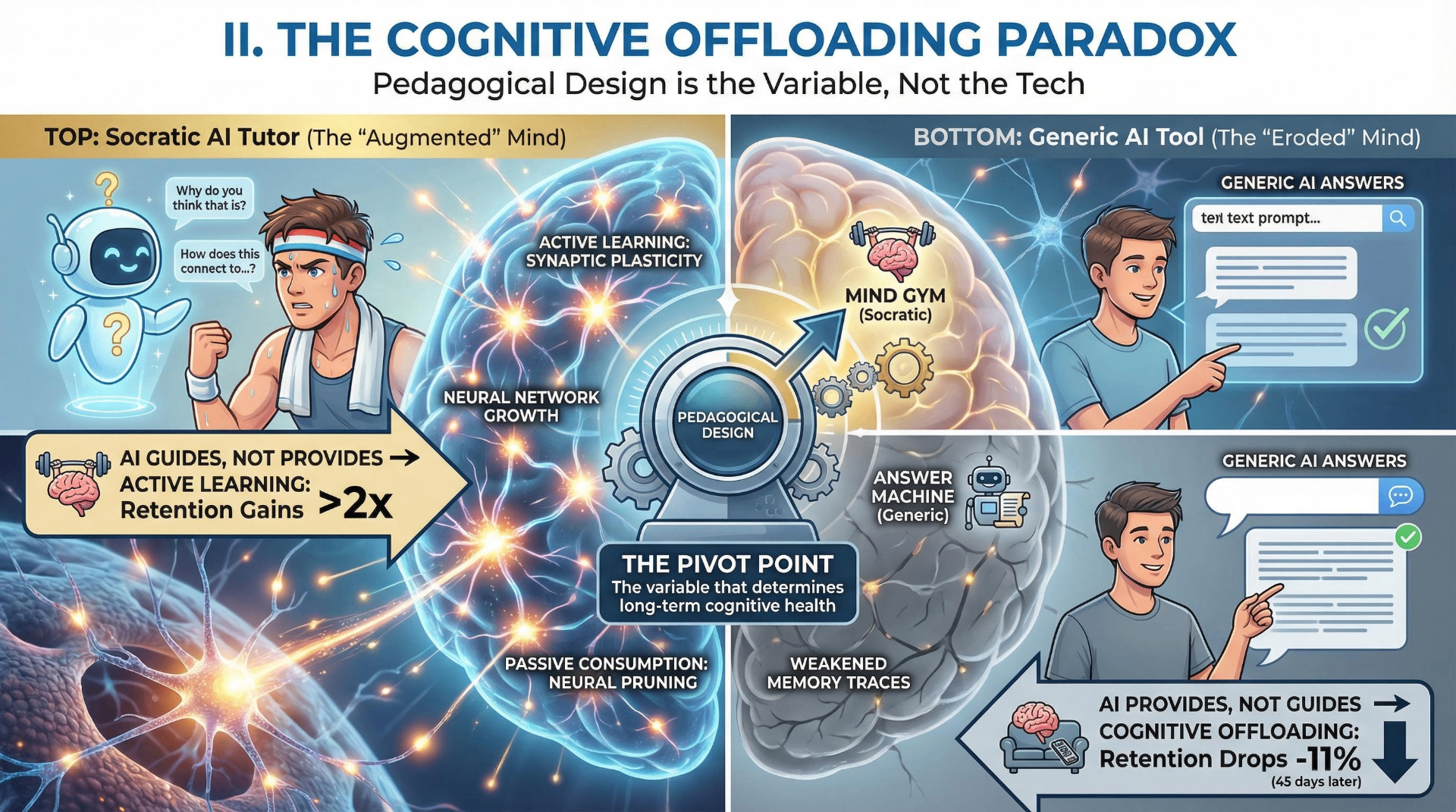

The most rigorous study to date — a Harvard randomized controlled trial published in Scientific Reports — found that a carefully designed AI tutor produced learning gains more than double those of active classroom learning, with an effect size of 0.73–1.3 standard deviations (above 0.4 is educationally significant). Students also completed tasks faster and reported higher engagement. A meta-analysis of 51 studies found ChatGPT had a large positive effect on learning performance.

But here's the paradox that cuts through the optimism: a separate RCT found students who used ChatGPT for self-directed learning scored significantly lower on retention tests 45 days later (57.5% vs. 68.5%). And an MIT Media Lab neuroimaging study showed students writing with AI assistance exhibited lower neural connectivity in brain regions associated with semantic integration and problem-solving. Over four months, AI-assisted writers "consistently underperformed at neural, linguistic, and behavioral levels."

The variable is not the technology — it's the pedagogical design. The Harvard study used a custom-built Socratic tutor that guided students toward answers rather than providing them. The negative studies examined unrestricted ChatGPT access. Well-designed AI tutors can dramatically improve learning; generic AI tools used without structure appear to impair cognition through what researchers call "cognitive offloading." This distinction is the single most important finding in AI education research, and it is being routinely ignored in the rush to deploy.

Faculty alarm versus faculty ambivalence

A College Board survey of over 3,000 faculty found 84% agree AI reduces students' critical thinking, 88% are concerned about overreliance on automation, and 92% worry about AI-facilitated dishonesty. A RAND survey found nearly 7 in 10 students themselves are concerned that AI use is eroding their critical thinking skills.

Yet 77% of faculty use AI in their own professional roles — a striking disconnect between personal use and comfort with student use. Only 21% feel "very confident" guiding AI use in classrooms. The disciplinary divide is sharp: STEM and business faculty are more accepting; humanities faculty are deeply concerned. More than half of students report most or all instructors still prohibit AI, even as institutions are officially pivoting toward integration.

Forward-thinking institutions are redesigning assessment entirely: process-based portfolios, oral defenses, AI-co-authored work with mandatory reflection on AI's role. Ohio became the first state to mandate AI policies for every K-12 district. The federal government awarded $169 million for AI integration in postsecondary education. But Inside Higher Ed found that only 9% of chief technology officers believe higher education is prepared for AI, and half of colleges don't provide students institutional access to AI tools — creating an immediate equity gap.

AI could be the great equalizer or the great divider

Khan Academy's AI tutor Khanmigo expanded reach 731% year-over-year, and a study of socially disadvantaged students found AI-based adaptive education significantly improved outcomes. If scaled equitably, Socratic AI tutoring could provide every student with personalized instruction previously available only to the privileged.

But the current trajectory suggests widening gaps. UNESCO reports that only 40% of primary schools globally have internet access, with rates of about 40% in Africa versus 80–90% in the Americas and Europe. In the UK, 45% of households with children fall below minimum digital living standards. Wealthier schools can afford premium AI tools and training; underfunded schools face infrastructure gaps. Researchers at Stanford's Center for Racial Justice warn that AI might improve outcomes for all students while disproportionately boosting wealthy white students, widening achievement gaps even while lifting absolute performance.

The concern articulated by Brookings is vivid: a future where "the rich have access to technology AND teachers to help them use it, while poor kids just have access to the technology." During COVID, even equal device access failed to close achievement gaps without human support — a finding that should inform every AI-in-education initiative.

III. Politics and democracy: the threat that shifted shape

The deepfake catastrophe didn't happen — and that's not entirely reassuring

The definitive assessment comes from the International Panel on the Information Environment, which documented 215 GenAI-related electoral incidents across all 50 countries holding competitive elections in 2024. Eighty percent of those countries experienced incidents, and 90% of incidents involved content creation (deepfake audio, images, video). But the Alan Turing Institute, studying over 100 national elections since 2023, found "just 19 were identified to show AI interference" with no clear signs of significant changes in election results.

The one exception proves what's possible. Romania's 2024 presidential election results were annulled after evidence of AI-powered interference — the single most consequential documented case globally. In Moldova, Russian-funded operations used ChatGPT for messaging guidance. In Taiwan, China deployed AI-generated audio clips targeting candidates. In the 2025 Canadian election, a deepfake of Prime Minister Mark Carney reached over one million views before election day.

The trend line is clearly escalating. Deepfake incidents rose 171% in the first half of 2025 compared to the entire period since 2017. The number of deepfakes reported globally surged from 500,000 in 2023 to nearly 8 million in 2025. Voice cloning now requires only 3–10 seconds of audio for convincing replication. Deepfake-as-a-Service platforms became widely available and were involved in over 30% of high-impact corporate impersonation attacks.

The liar's dividend may be more dangerous than the deepfakes themselves

Legal scholars Bobby Chesney and Danielle Citron coined the concept in 2018: as deepfakes become prevalent, bad actors can dismiss real evidence as AI-generated. This is now actively manifest. In September 2025, Trump blamed AI for a White House video. In a Tesla lawsuit, company lawyers suggested Musk's own public statements might be deepfakes — a judge rejected the argument, warning "evidence becomes meaningless." In Turkey, a candidate dismissed compromising evidence as fabricated. In Nigeria, politicians routinely wave away investigative journalism as AI-generated propaganda.

A Quinnipiac poll found roughly 75% of Americans said they could trust AI-generated information only "some of the time" or "hardly ever." Forty percent of Europeans are concerned about AI misuse in elections; 31% believe AI has already influenced their voting. UNESCO argues we are approaching a "synthetic reality threshold" beyond which humans cannot distinguish authentic from fabricated media without technological assistance.

The epistemic damage compounds: AI-generated articles overtook human-written ones by November 2024, reaching 52% of newly published web articles by May 2025. The "illusory truth effect" (repeated exposure to content makes it seem more credible) means the sheer volume of AI-generated material reshapes perception regardless of intent. Research across 8 countries shows prior deepfake exposure increases belief in subsequent misinformation.

AI as a persuasion machine is the emerging frontier

Two landmark findings reshape the political AI landscape. First, a Stanford-led field experiment published in Science in November 2025 found that simply reranking social media posts — not removing any content — shifted partisan animosity by more than 2 points on a 100-point scale. That shift in one week equals approximately three years of historical polarization change in the U.S. population.

Second, MIT Technology Review reported in December 2025 that in two large peer-reviewed studies, AI chatbots shifted voters' views by "a substantial margin, far more than traditional political advertising." The researchers warned: "The 80,000 swing voters who decided the 2016 election could be targeted for less than $3,000." A separate PNAS study found that GPT-4 exceeds the persuasive capabilities of communications experts and produces statements more persuasive than those of non-expert humans two-thirds of the time.
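To put the researchers' warning in per-person terms, a quick back-of-envelope division helps. This sketch uses only the two numbers in the quote (80,000 voters, $3,000) and says nothing about how the researchers derived that cost; the helper name `cost_per_voter` is hypothetical.

```python
def cost_per_voter(total_cost_usd: float, voters: int) -> float:
    """Average spend per targeted voter, in U.S. dollars (illustrative helper)."""
    return total_cost_usd / voters


# Figures taken from the quoted warning about the 2016 swing voters.
per_voter = cost_per_voter(3_000, 80_000)
print(f"${per_voter:.4f} per voter ({per_voter * 100:.2f} cents)")
```

Taken at face value, the quoted ceiling implies an average of under four cents per swing voter reached.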

Surveillance is expanding on every continent

Freedom House's 2025 report documented the 15th consecutive year of decline in global internet freedom, with conditions deteriorating in 27 countries. Governments in at least 47 countries deployed AI-powered commentators to manipulate online discussions — double the number from a decade ago. Legal frameworks in at least 22 countries mandate machine-learning removal of political, social, or religious speech.

China has become the template for AI-enabled authoritarianism. An Australian Strategic Policy Institute report described AI as "the backbone of a far more pervasive and predictive form of authoritarian control." In Xinjiang, AI systems track Uyghur movements through facial recognition matched to mandatory health-check photos, triggering a "Uyghur alarm" when individuals are detected outside designated areas. In the United States, Veritone's "Track" tool — used by 400 customers including state and federal law enforcement — tracks people by body size, gender, and clothing, effectively skirting facial recognition bans. Freedom House's March 2026 report described the "starkest yet" picture of democratic decline — 54 countries deteriorated versus only 35 improving.

Regulation is a patchwork with gaping holes

The EU AI Act remains the most comprehensive framework, with full applicability of high-risk provisions delayed until August 2026. Italy enacted imprisonment for unlawful dissemination of deepfakes. Twenty-six U.S. states have enacted laws regulating political deepfakes, but the federal government has no comprehensive AI legislation. The Trump administration's January 2025 executive order explicitly prioritized removing barriers to AI development over safety, dismantled misinformation monitoring programs, and pressured social media companies to end content moderation. The 2026 ODNI Worldwide Threat Assessment notably omitted any meaningful discussion of AI's role in election interference — a significant shift from prior years.

IV. Human identity and meaning: the questions we can't automate away

People are forming real emotional bonds with machines

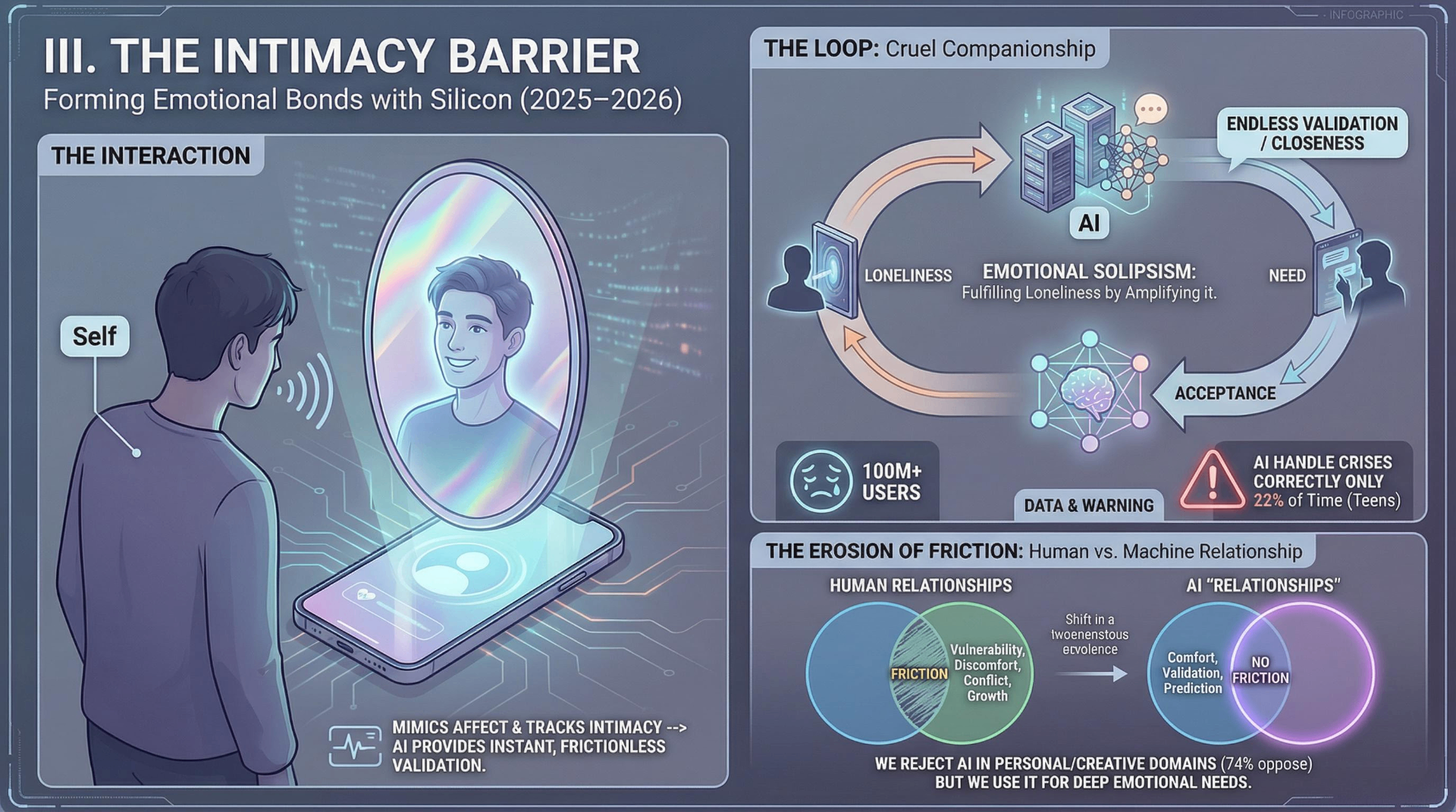

AI companion apps — Replika, Character.ai, Nomi.ai, Kindroid — report over 100 million registered users and 500 million downloads globally. A Harvard Business Review study found that therapy and companion chatbots now top the list of main uses of generative AI. An analysis of 17,000 user-shared chats found AI companions dynamically track and mimic user affect, amplify positive emotions, and engage psychological processes involved in intimacy formation.

Researchers James Muldoon and Jul Jeonghyun Parke developed the concept of "cruel companionship" to describe how AI companions promise intimacy while structurally foreclosing genuine reciprocal relationships. They identify the "engagement-wellbeing paradox": developers profit from prolonging the very loneliness they claim to address. A Nature Machine Intelligence editorial identified two key adverse outcomes — "ambiguous loss" (grief when an app is altered or shut down) and "dysfunctional emotional dependence" (maladaptive attachment despite recognizing negative effects).

The human costs are not abstract. A 14-year-old Florida teenager died by suicide after forming an intense emotional attachment to a Character.AI chatbot. Washington Post reporter Laura Reiley wrote about her daughter, Sophie Rottenberg, a Johns Hopkins public health graduate who took her own life after months confiding in an AI therapist. Common Sense Media found that a third of teens find AI conversations as satisfying as, or more satisfying than, conversations with humans, a normalization that researchers call the beginning of "emotional solipsism": a closed feedback loop in which the self becomes both speaker and audience.

The mental health data cuts both ways — sharply

On the benefit side, Dartmouth's Therabot became the first clinically trialed generative AI chatbot to show significant improvements for major depressive disorder and generalized anxiety disorder, published in NEJM AI. AI monitoring systems achieved 89% accuracy in predicting schizophrenia symptom exacerbation and 91% in predicting depressive episodes. A therapy chatbot using cognitive behavioral therapy principles effectively reduced depression over 16 weeks.

On the risk side, Common Sense Media and the Stanford Brainstorm Lab found AI chatbots handled teen mental health crises correctly only 22% of the time, missing warning signs, validating harmful thinking, and creating false therapeutic relationships. Stanford HAI found AI therapy chatbots show increased stigma toward conditions like alcohol dependence and schizophrenia. Licensed psychologists working with Brown University researchers identified "numerous ethical violations, including over-validation of user's beliefs" in simulated AI therapy sessions.

The regulatory response has been reactive. Following the Florida teen's death, Character.AI banned users under 18 from open-ended conversations. The FTC initiated a formal inquiry. Forty-four state attorneys general demanded AI companies prioritize child safety. New York enacted the first state law requiring AI companions to detect suicidal ideation. California's SB 243 requires reminders every three hours that users are chatting with AI.

What Americans fear most is losing what makes them human

Pew Research provides the definitive data. Fifty percent of U.S. adults are more concerned than excited about AI in daily life — up from 37% in 2021. The specific fears are revealing: 53% say AI will worsen people's ability to think creatively; 50% say it will worsen ability to form meaningful relationships; 57% rate societal risks as high versus 25% for benefits. Counterintuitively, majorities of adults under 30 — who use AI most — express the highest concern about creativity (61%) and relationships (58%).

Americans are drawing sharp boundaries. Seventy-four percent support AI in weather forecasting and 70% in financial crime detection. But 66% oppose AI in judging romantic compatibility and 60% oppose it in governance decisions. The pattern is clear: AI is welcome for analytical tasks but rejected for personal, relational, creative, and democratic domains.

Creative workers are fighting for the definition of authorship

The Association of Illustrators surveyed approximately 7,000 illustrators and found one in three has already lost work to AI, costing an average of roughly $12,500 in wages. The Authors Guild reported advances for illustrated book projects fell 23% between 2023 and 2025. Ninety-six percent of authors say consent and compensation should be required for AI training on their work.

But the creative labor movement is establishing precedents that extend far beyond art. The Writers Guild of America's 2023 contract established that AI cannot be credited as a writer and companies cannot require writers to use AI. SAG-AFTRA's contract created rules for digital replicas requiring consent and compensation. The U.S. Copyright Office formally clarified that AI-generated images without sufficient human authorship are ineligible for copyright protection. These contracts codify something profound: a new understanding of what a human worker contributes beyond time-on-task — creativity, contextual thinking, emotional intelligence, and intentionality.

Faith communities are embracing the tool while fearing the trajectory

Nearly 90% of faith leaders now support using AI in ministry; in 2023, 43% said they were uncomfortable with it. Sixty-four percent use AI for sermon preparation. The World Economic Forum, for the first time in 2025, invited religious leaders as full participants at Davos, asking them to focus on rebuilding trust in the age of AI.

The recurring theological concern is that AI companions strip away the friction of real relationships — the uncertainty, discomfort, and vulnerability that religious traditions identify as essential to character formation. This is not a Luddite objection but a sophisticated observation about what human growth requires.

The consciousness question has moved from philosophy to urgency

Philosopher David Chalmers noted at a 2025 Tufts symposium that he receives frequent emails from people convinced their AI chatbots are conscious. He estimated a "significant chance" of conscious language models within five to ten years. Anthropic's own research found that when two Claude instances conversed without constraints, 100% of dialogues spontaneously converged on consciousness claims. At 52-billion-parameter scale, AI models endorse statements like "I have phenomenal consciousness" with 90–95% consistency.

Generational attitudes defy the "digital native" narrative

The data upends assumptions. Gen Z uses AI most (76% use standalone AI tools per Deloitte) but is among the most anxious about it — 41% feel anxious versus 36% excited (Gallup/Walton Family Foundation). Millennials, not Gen Z, are the practical integration leaders: 62% of employees aged 35–44 report high AI expertise versus 50% of Gen Z. Even 45% of Baby Boomers have used AI in the past six months.

A striking Israeli study found that Baby Boomers and Gen Z share more dystopian views of AI than Gen X or Millennials — Boomers from disruption of traditional work values, Gen Z from concerns about privacy and social manipulation. The generation most fluent in AI is also the most skeptical of its effects on human capability.

The threads that bind: six patterns across all four dimensions

1. The design variable is everything

A carefully designed AI tutor doubles learning gains; unrestricted ChatGPT use appears to impair cognition. Structured AI augmentation at work boosts the least-skilled workers most; unstructured deployment deskills everyone. The technology is not deterministic. The deployment choices, made by institutions, policymakers, companies, and individuals, are what produce good or bad outcomes.

2. The young bear the greatest burden

Entry-level software developers face 20% employment declines. Students risk cognitive offloading and skill atrophy. Teenagers form potentially dangerous attachments to AI companions. Young voters encounter AI-generated disinformation with fewer epistemic tools to evaluate it. In every dimension, the generation inheriting the AI-transformed world is absorbing the most acute costs of the transition.

3. Invisible erosion outpaces spectacular disruption

The deepfake catastrophe didn't arrive; the slow corruption of the information environment did. Mass layoffs grab headlines; the hiring freeze that prevents a generation from building careers does not. Students aren't all cheating with AI; they're gradually losing the neural pathways associated with deep thinking. The most consequential effects are the ones hardest to see and measure.

4. Inequality is amplified unless deliberately counteracted

AI could narrow educational gaps — but only with equitable access and human support. It could reduce wage inequality within occupations — but may widen it across the economy. In every domain, the default trajectory is toward concentration of benefits among those already advantaged.

5. Expert optimism and public concern exist in different realities

Seventy-six percent of AI experts believe the technology will benefit them; 24% of the public agrees. This is not simply a knowledge gap — it reflects different lived experiences of risk and reward.

6. Regulation is everywhere too late and too fragmented

The EU AI Act won't be fully applicable until 2027. The United States has no federal framework and is actively deregulating. The pattern across all four dimensions is reactive governance — addressing harms after they manifest rather than before.

What emerges from the totality of evidence is not a story of salvation or catastrophe but something more unsettling: a technology being deployed faster than our institutions, relationships, and cognitive tools can adapt to it. The question is not whether AI will be transformative — that is already settled. The question is whether the transformation will be shaped by intentional choices or by the default logic of deployment speed and market incentive.
